Most AI products look great in demos. I’ve shipped enough of them to production to know that the demo is the easy part.

The real challenges show up later. Metrics move in ways you did not expect. User behavior shifts. Systems drift from what you originally designed. What looks impressive in isolation often fails quietly in the real world.

And yet, most creative AI products are still evaluated on novelty, UX polish, or output quality. These are useful early signals, but they do not tell you whether a system will hold up under real usage.

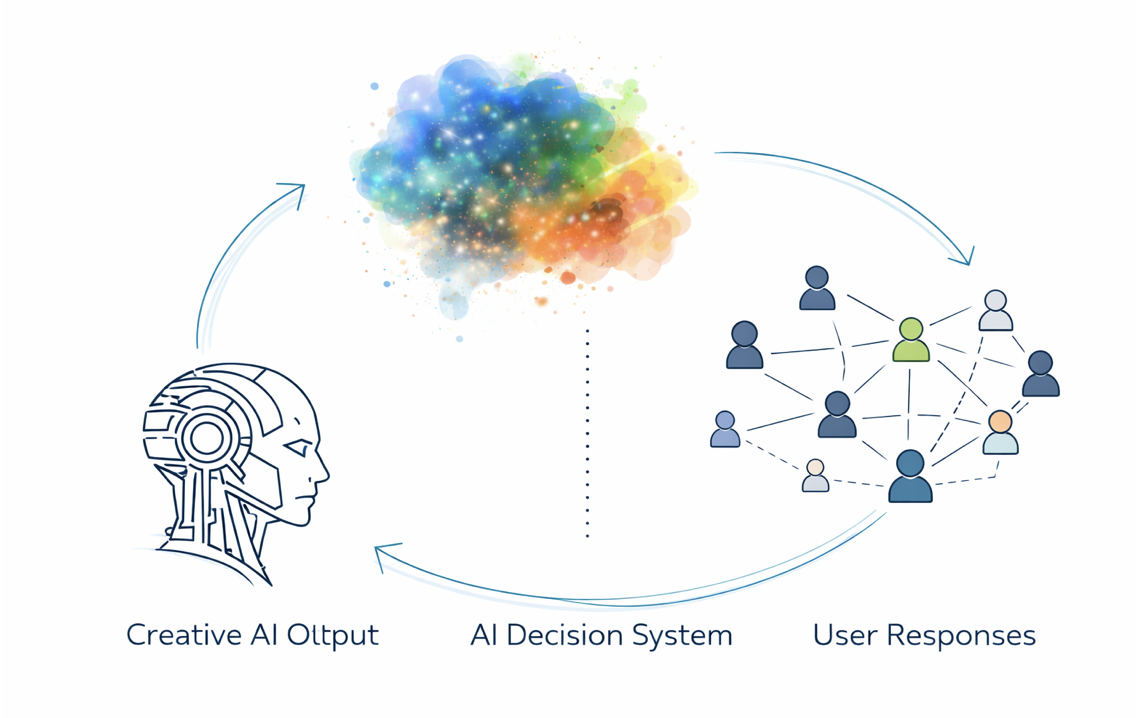

The gap is simple. AI products are not just features. They are decision systems. They continuously decide what to generate, what to show, and how to adapt based on user behavior. If you evaluate them like static products, you miss what actually matters.

This is the framework I use to evaluate creative AI systems beyond the demo.

Product Intentionality

The first question is whether AI is actually doing the work.

I’ve seen many products where AI is layered on top of an existing workflow. It generates outputs, but the core experience remains unchanged. These products tend to plateau quickly. The difference shows up when AI drives the system itself.

In one of the ranking systems I worked on, the model determined what content was eligible, how it was ordered, and how different user intents were handled. During a degradation event, we fell back to a simpler baseline. The system did not crash. Pages still loaded. Results were still returned.

But the experience broke. Users saw generic, repetitive outputs. Diversity collapsed. Engagement dropped.

The system was functional, but no longer useful. That is when you know AI is not an enhancement. It is the engine.

Simple test: If the AI underperforms, does the product degrade slightly or fundamentally lose its value?
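You can run this test instead of arguing about it. Replay the same requests through the model and through the fallback baseline, then compare a diversity metric. The minimal sketch below assumes served results are available as lists of item IDs per request. It uses catalog coverage (distinct items divided by served slots) as the metric. Both the metric and the function names are illustrative assumptions, not the instrumentation from the system described above.

```python
from typing import Sequence


def coverage(pages: Sequence[Sequence[str]]) -> float:
    """Share of served slots filled by a distinct item, across all requests."""
    served = [item for page in pages for item in page]
    if not served:
        return 0.0
    return len(set(served)) / len(served)


def degradation_ratio(model_pages: Sequence[Sequence[str]],
                      fallback_pages: Sequence[Sequence[str]]) -> float:
    """Fraction of coverage lost when the fallback replaces the model.

    0.0 means the fallback is as diverse as the model; values near 1.0 mean
    results have collapsed onto the same few items.
    """
    model_cov = coverage(model_pages)
    if model_cov == 0.0:
        return 0.0
    return 1.0 - coverage(fallback_pages) / model_cov


# Hypothetical replay of the same three requests through both paths.
replayed_model = [["a", "b", "c"], ["d", "e", "f"], ["g", "h", "a"]]
replayed_fallback = [["a", "b", "c"], ["a", "b", "c"], ["a", "b", "d"]]
print(degradation_ratio(replayed_model, replayed_fallback))  # → 0.5
```

A ratio near zero suggests the AI is an enhancement. A large ratio is the measurable form of "functional, but no longer useful." Coverage is only one proxy, so pairing it with engagement would give a fuller picture.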

Visibility And Allocation

Most teams focus on generation. Very few focus on distribution. In practice, what gets shown matters more than what gets generated.

I’ve worked on systems where improving model quality had limited impact because the real issue was allocation. Small changes in ranking or selection logic shifted outcomes far more than improvements in generation did. Creative AI products behave the same way.

Two systems can generate equally strong outputs. The one that wins is the one that decides what to surface, to whom, and when.

Evaluation should ask: How does the system decide what gets seen?

Because that decision layer is the product.
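Here is a minimal sketch of the allocation point. Two selection policies receive the same candidates with the same model scores, and they differ only in the decision layer. The candidate data, the per-creator cap, and all function names are hypothetical. The cap stands in for whatever allocation rule a real system applies.

```python
from collections import Counter
from typing import List, Tuple

# Hypothetical candidates: (item_id, creator, model_score). Both policies see this same pool.
Candidate = Tuple[str, str, float]

CANDIDATES: List[Candidate] = [
    ("i1", "alice", 0.95),
    ("i2", "alice", 0.94),
    ("i3", "alice", 0.93),
    ("i4", "bob", 0.90),
    ("i5", "carol", 0.89),
    ("i6", "alice", 0.88),
]


def top_k(cands: List[Candidate], k: int) -> List[Candidate]:
    """Greedy policy: surface the k highest-scoring items."""
    return sorted(cands, key=lambda c: c[2], reverse=True)[:k]


def capped_top_k(cands: List[Candidate], k: int, per_creator: int) -> List[Candidate]:
    """Same scores and order, but no creator may take more than per_creator slots."""
    chosen: List[Candidate] = []
    used: Counter = Counter()
    for cand in sorted(cands, key=lambda c: c[2], reverse=True):
        if len(chosen) >= k:
            break
        if used[cand[1]] < per_creator:
            chosen.append(cand)
            used[cand[1]] += 1
    return chosen


def exposure(slate: List[Candidate]) -> Counter:
    """Count how many slots each creator received on the page."""
    return Counter(c[1] for c in slate)


print(exposure(top_k(CANDIDATES, 4)))                        # alice takes 3 of 4 slots
print(exposure(capped_top_k(CANDIDATES, 4, per_creator=2)))  # alice 2, bob 1, carol 1
```

Nothing about generation changed between the two runs. Even so, one creator's share of the page dropped from three slots to two, and a third creator became visible.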

Systemic Feedback Loops

Every AI system changes user behavior. That behavior feeds back into the system. This creates feedback loops.

I’ve seen systems optimize for engagement and slowly drift toward extreme or repetitive patterns. Not because the model was wrong, but because the system kept reinforcing what users reacted to.

Users adapt to the system. The system adapts to users. Over time, the product becomes something no one explicitly designed.

This is especially relevant in creative AI, where exploration and variation matter.

Key question: What behaviors does the system reward, and what happens when those behaviors compound?

If you cannot answer this, you are not evaluating long-term behavior.
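A toy simulation shows how a loop can concentrate exposure without any change in item quality. For illustration only, it assumes two things: exposure is allocated in proportion to popularity raised to a power `alpha`, and every item converts impressions at the same rate. `alpha`, the traffic and click-through values, and the function names are hypothetical parameters, not measurements from a real system.

```python
from typing import List


def exposure_shares(popularity: List[float], alpha: float) -> List[float]:
    """Allocate exposure in proportion to popularity ** alpha.

    alpha == 1 is purely proportional; alpha > 1 over-rewards items that are
    already ahead, which is the reinforcing behavior this toy model assumes.
    """
    weights = [p ** alpha for p in popularity]
    total = sum(weights)
    return [w / total for w in weights]


def simulate(popularity: List[float], alpha: float, rounds: int,
             traffic: float = 100.0, ctr: float = 0.1) -> List[float]:
    """Feed each round's engagement back into popularity; return final exposure shares.

    Every item has the same ctr, so any concentration that emerges comes from
    the allocation rule, not from differences in item quality.
    """
    pop = list(popularity)
    for _ in range(rounds):
        shares = exposure_shares(pop, alpha)
        # Engagement = impressions * ctr, added straight back into popularity.
        pop = [p + s * traffic * ctr for p, s in zip(pop, shares)]
    return exposure_shares(pop, alpha)


start = [11.0, 10.0, 10.0]  # one item starts marginally ahead
print([round(s, 3) for s in simulate(start, alpha=1.0, rounds=50)])  # → [0.355, 0.323, 0.323]
print([round(s, 3) for s in simulate(start, alpha=2.0, rounds=50)])  # leader's share grows
```

With `alpha = 1.0` the starting split is preserved indefinitely. With `alpha = 2.0` the item that began marginally ahead gains share every round, even though every item converts at the same rate. That is the kind of compounding the key question asks about.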

Marketplace Incentives

AI products rarely operate in isolation. They sit inside ecosystems. Users, creators, platforms, and sometimes advertisers all interact through the system. AI changes how value flows between them.

In marketplace systems I’ve worked on, even small changes in ranking logic shifted seller behavior, pricing strategies, and supply quality. The model did not just optimize outcomes. It reshaped the ecosystem.

AI platforms follow the same pattern.

If incentives favor volume, you get spam.

If they favor engagement, you get distortion.

If they do not reward quality, quality disappears.

AI amplifies whatever incentive structure you design.

Evaluation should ask: Who benefits as the system scales, and who gets left behind?
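A few lines of arithmetic can check these incentive claims. The sketch below compares two hypothetical creator strategies under three illustrative payout rules: one strategy produces many low-quality items, the other a few high-quality ones. The quality scores, the 0.5 threshold, and the rule names are assumptions chosen for the example.

```python
from typing import Callable, Dict, List

# Hypothetical creators: each list holds a per-item quality score in [0, 1].
CREATORS: Dict[str, List[float]] = {
    "volume_creator": [0.2] * 10,   # many low-quality items
    "quality_creator": [0.9] * 2,   # few high-quality items
}


def pay_per_item(items: List[float]) -> float:
    """Reward raw volume."""
    return float(len(items))


def pay_by_quality_sum(items: List[float]) -> float:
    """Weight each item by quality, but with no cap on volume."""
    return sum(items)


def pay_quality_gated(items: List[float], threshold: float = 0.5) -> float:
    """Only items at or above the quality threshold earn anything."""
    return sum(q for q in items if q >= threshold)


def winner(payout: Callable[[List[float]], float]) -> str:
    """Return the creator whose strategy earns the most under a payout rule."""
    return max(CREATORS, key=lambda name: payout(CREATORS[name]))


for rule in (pay_per_item, pay_by_quality_sum, pay_quality_gated):
    print(rule.__name__, "->", winner(rule))
# → pay_per_item -> volume_creator
# → pay_by_quality_sum -> volume_creator
# → pay_quality_gated -> quality_creator
```

The middle rule is the instructive one. Weighting by quality still pays the volume strategy, because ten items at 0.2 outweigh two items at 0.9 when output is unbounded. Only the gated rule makes quality the winning strategy.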

Scaling Moats

Most AI products work well in controlled environments. That is not the bar. The real test is what happens under scale.

As usage grows, systems face:

- higher variance in outputs
- more edge cases
- latency and reliability constraints

I’ve seen systems that performed well offline fail in production because they could not handle this complexity. The key question is whether the system improves with usage or degrades.

Strong systems learn and stabilize. Weak systems become noisier. That difference is your moat.
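One way to tell which side of that line a system is on is to measure how noisy a quality metric is within successive usage windows, then fit a trend to that noise. The sketch below assumes a hypothetical per-period quality score, such as a daily acceptance rate. It uses the population standard deviation per window and a least-squares slope. The metric, the window size, and the data are illustrative assumptions.

```python
from statistics import mean, pstdev
from typing import List


def window_noise(metric: List[float], window: int) -> List[float]:
    """Population std dev of the metric in consecutive, non-overlapping windows.

    A trailing partial window is dropped.
    """
    return [pstdev(metric[i:i + window])
            for i in range(0, len(metric) - window + 1, window)]


def trend(values: List[float]) -> float:
    """Least-squares slope of values against their window index."""
    if len(values) < 2:
        return 0.0
    xs = range(len(values))
    x_bar, y_bar = mean(xs), mean(values)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


# Hypothetical per-period quality scores for a system that settles down with usage.
stabilizing = [0.60, 0.90, 0.50, 0.80, 0.70, 0.75, 0.72, 0.74, 0.73, 0.73, 0.73, 0.73]

print(trend(window_noise(stabilizing, 4)) < 0)        # → True: noise shrinking
print(trend(window_noise(stabilizing[::-1], 4)) > 0)  # → True: noise growing
```

A negative slope means noise is shrinking as usage accumulates, and a positive slope is the "noisier" signature. With only a few windows the slope is fragile, so it works better as a prompt for investigation than as a verdict.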

From Features To Systems

The shift for creative AI builders is simple.

Stop evaluating outputs. Start evaluating behavior.

Stop asking if the demo works. Start asking what the system becomes over time.

Because the products that win are not the ones that look the most impressive on day one. They are the ones that remain useful, reliable, and aligned as they scale. That is the difference between a prototype and a product.